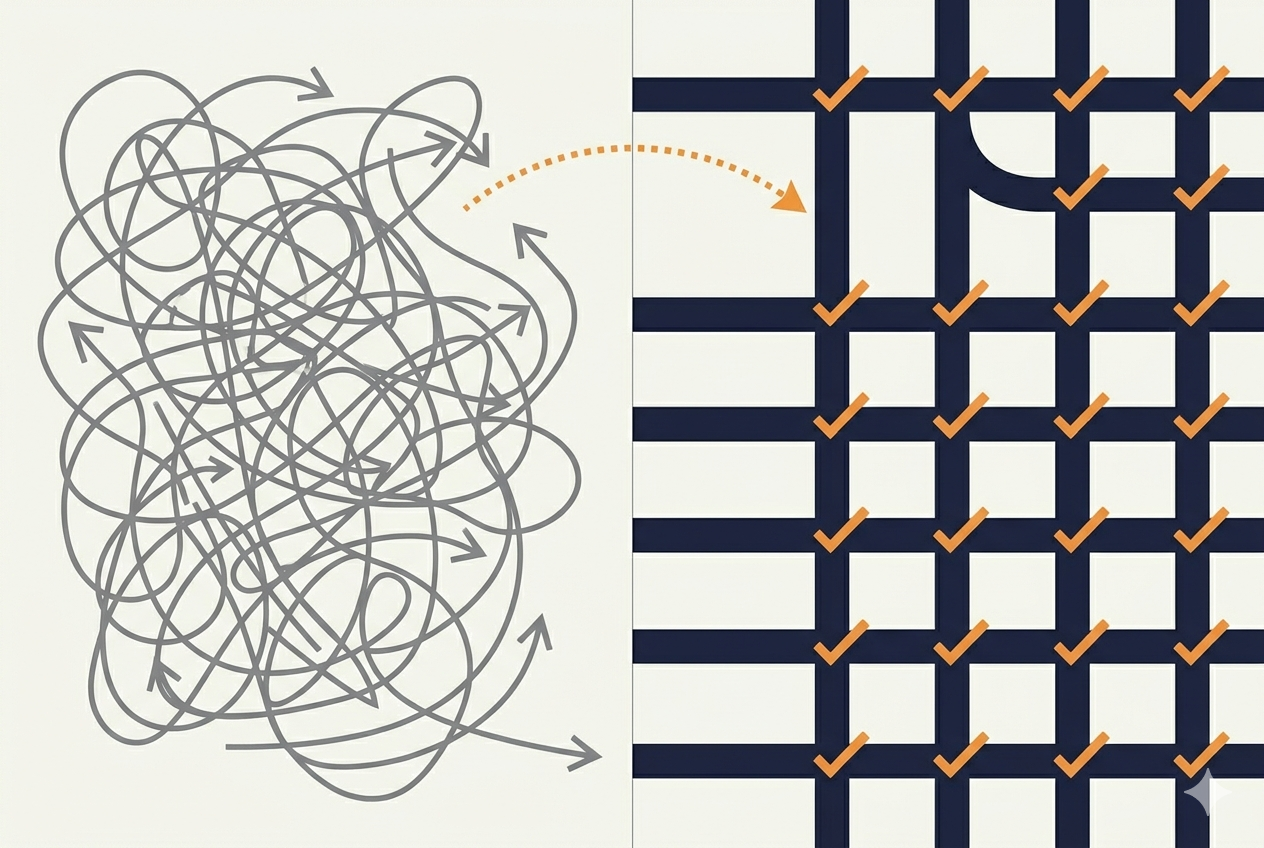

Agents are flexible. That's the problem.

You can give an agent a checklist, constraints, a detailed procedure. It will often follow them. But it will also improvise, skip steps, or misinterpret your intent. If each step succeeds independently, an agent that is 95% accurate per step completes a 20-step task only about 36% of the time (0.95^20 ≈ 0.36). At 90% per step, you're at 12%. Flexibility is the feature, and it is also the failure mode.
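The compounding is easy to check yourself. Here is a minimal sketch, assuming every step succeeds independently with the same probability (a simplification; real agent errors are often correlated). The function name is illustrative.

```python
def task_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


for accuracy in (0.95, 0.90):
    rate = task_success_rate(accuracy, 20)
    print(f"{accuracy:.0%} per step over 20 steps: {rate:.0%} end to end")
```

Changing the step count shows how quickly long tasks erode: the same 95% agent on a 5-step task still finishes cleanly about three times in four.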

The human in the loop isn't there to do the work. The human is there because someone has to know the domain well enough to catch when the agent drifts and pull it back.

Gartner projects that generative AI will require 80% of the engineering workforce to upskill through 2027. Only 28% of organizations plan to invest in upskilling. The money flows to the tools. The investment in the people using them doesn't keep pace.

Here are five habits that close the gap.

Observing

1. Know what right looks like

You can't catch an agent drifting if you don't know the destination.

A Harvard/BCG study (published in Organization Science, 2026) tested 758 consultants using GPT-4. On tasks inside AI's capability frontier, consultants using AI completed 12% more tasks, 25% faster, at 40% higher quality than those working without it. On tasks outside that frontier, AI users performed worse than those working without AI. They trusted the output without recognizing it was wrong.

Ethan Mollick calls this the "jagged frontier": AI is excellent at some tasks and terrible at others, and the boundary is irregular and unintuitive. The frontier keeps moving outward as models improve, but the principle holds: you have to know the work well enough to evaluate whether the output is correct, not just whether it looks correct.

For leaders, the question isn't "are our people using AI?" It's "do our people know the work well enough to judge AI's output?"

2. Stay in the loop — detect when you've fallen out

Agents produce confident, fluent output. It reads well. It looks right. It's easy to stop checking.

This is a well-studied problem. In 1983, Lisanne Bainbridge published "Ironies of Automation" — arguing that the most automated systems require the most investment in operator training, not the least. When automation handles the routine work, operators lose the practice and situational awareness needed to intervene when it fails.

Parasuraman and Manzey extended this in 2010, finding that higher automation reliability paradoxically increases complacency. A system that's right 95% of the time is harder to oversee than one that's right 70% of the time, because the 95% system trains you to stop looking.

The habit is metacognitive. Regularly ask yourself: "Did I actually verify that, or did I just accept it?" If you can't remember the last time you caught the agent making a mistake, you've probably stopped checking.

Intervening

3. Interrupt early

When the agent is heading the wrong direction, stop it. Don't wait for it to finish a long chain of work before correcting course. The longer it runs unchecked, the more you have to undo.

Aviation learned this the hard way. After a series of fatal accidents caused by hierarchical cockpit culture — where junior officers didn't speak up about problems the captain was causing — the industry developed Crew Resource Management. The core principle: anyone who sees a problem has the responsibility to flag it immediately. Waiting is not deference. Waiting is risk.

When you see the agent going wrong, the instinct is to let it finish — it feels inefficient to interrupt. Override that instinct. Early correction is cheap. Late correction is expensive.
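If you drive an agent from code, the same habit can be built into the loop. This is a rough sketch, not a real agent framework: each step is a hypothetical `(name, action, check)` triple, and the run halts at the first check that fails, so nothing gets built on top of a bad result.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical step shape: a label, the work to run, and a check on its result.
Step = Tuple[str, Callable[[], object], Callable[[object], bool]]


def run_with_checkpoints(steps: List[Step]) -> Tuple[List[str], Optional[str]]:
    """Run steps in order and stop at the first one whose check fails.

    Returns the names of the steps that passed and the name of the
    failing step (or None if every check passed).
    """
    completed: List[str] = []
    for name, action, check in steps:
        result = action()
        if not check(result):
            return completed, name  # interrupt here; later steps never run
        completed.append(name)
    return completed, None


# Stand-in steps; the "sort" action returns unsorted data to mimic drift.
steps: List[Step] = [
    ("load", lambda: [3, 1, 2], lambda rows: len(rows) == 3),
    ("sort", lambda: [1, 3, 2], lambda rows: rows == sorted(rows)),
    ("publish", lambda: "done", lambda status: status == "done"),
]

completed, failed = run_with_checkpoints(steps)
print(completed, failed)
```

Here the run stops at `sort` and `publish` never executes. The checks are only as good as your knowledge of what a correct result looks like.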

4. Correct actively

When an agent does something wrong, two instincts kick in: write more detailed instructions, or quietly fix the output and move on. Neither works.

More instructions usually miss the point. The problem often isn't ambiguity — it's that the agent interpreted your intent differently than you meant it. More words don't fix a misalignment in understanding. Instead, ask the agent to explain its reasoning. "Why did you do X instead of Y?" Often the answer reveals a misunderstanding you can address directly.

And silent corrections teach nothing. If you quietly fix a mistake without mentioning it, the agent has no signal that something went wrong. It will make the same mistake again. If you want a procedure followed, restate the boundary explicitly — every time, within the conversation context.

The best collaborations have two-way uncertainty signaling. You flag when the output looks wrong. The agent flags when the instructions are ambiguous. Configure your system prompts to encourage the agent to surface uncertainty rather than guessing. Neither side should guess silently.
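One way to set this up is sketched below. The prompt wording and the `UNCERTAIN:` marker are assumptions made up for illustration, not a vendor convention, and no particular model API is implied. The point is to give the agent an explicit, parseable way to say "I'm not sure."

```python
from typing import List

# Illustrative marker; any consistent, easy-to-spot prefix would work.
MARKER = "UNCERTAIN:"

UNCERTAINTY_POLICY = f"""\
If an instruction is ambiguous, stop and ask before acting.
If you are unsure about a fact or a step, start a line with "{MARKER}"
and say what you would need to know.
Do not guess silently."""


def build_system_prompt(base_instructions: str) -> str:
    """Append the uncertainty policy to whatever instructions you already use."""
    return f"{base_instructions.strip()}\n\n{UNCERTAINTY_POLICY}"


def flagged_uncertainties(reply: str) -> List[str]:
    """Collect the lines of a reply that the agent marked as uncertain."""
    flagged = []
    for line in reply.splitlines():
        stripped = line.strip()
        if stripped.startswith(MARKER):
            flagged.append(stripped[len(MARKER):].strip())
    return flagged


reply = "Step 1 is complete.\nUNCERTAIN: which date format does the report use?"
print(flagged_uncertainties(reply))
```

An empty list does not prove the agent was certain. It only means it did not flag anything, so you still need to check the output.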

Dario Amodei frames this well: powerful AI is "not humans out of the loop" but "humans with access to unlimited genius labor" operating within constraints that humans define and enforce. The defining and enforcing part is the job.

Systematizing

5. Graduate to scripts

If you're repeatedly asking an agent to do the same transformation — reformatting data, running the same checks, generating the same boilerplate — stop. Have the agent write a script instead.

You get the determinism and reproducibility of code with the flexibility of the agent that produced it. The agent was useful for figuring out the solution. Once it's figured out, lock it down. Scripts don't skip steps. Scripts don't improvise. Once tested, scripts don't need step-by-step oversight.
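As an illustration, suppose the recurring request is "convert the dates in this CSV to ISO format." The column name and the MM/DD/YYYY input format below are assumptions for the example. A script of this kind is what you would ask the agent to produce once and then reuse.

```python
import csv
import io
from datetime import datetime


def normalize_dates(csv_text: str, column: str = "date") -> str:
    """Rewrite MM/DD/YYYY values in one column as ISO 8601 (YYYY-MM-DD)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=reader.fieldnames, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        # strptime raises ValueError on malformed input, so bad rows fail
        # loudly instead of being silently "fixed" in some improvised way.
        parsed = datetime.strptime(row[column], "%m/%d/%Y")
        row[column] = parsed.date().isoformat()
        writer.writerow(row)
    return out.getvalue()


print(normalize_dates("id,date\n1,03/07/2024\n2,12/31/2023\n"))
```

The same input always yields the same output, and unexpected input stops the run with an error. That is the trade: you give up improvisation and get reproducibility.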

Keep agents for the parts that require judgment — novel problems, ambiguous inputs, tasks where flexibility is actually the point. Use scripts for everything else. Know when to graduate from ad-hoc to formalized.

This is the meta-habit: knowing when something should stay flexible and when it should become rigid. The best AI collaborators aren't the ones who use agents for everything — they're the ones who know when to stop.

At a glance

- Know what right looks like — Domain expertise is the prerequisite. You can't catch drift you can't recognize.

- Stay in the loop — Monitor actively. If you can't remember the last time you caught a mistake, you've stopped checking.

- Interrupt early — Don't wait for the agent to finish going the wrong direction. Early correction is cheap.

- Correct actively — Ask why before rewriting instructions. Restate boundaries explicitly. Never fix silently.

- Graduate to scripts — Once you've solved a problem with an agent, lock it down in code. Keep agents for judgment.

The real bottleneck

46% of executives cite talent skill gaps as the main reason for slow AI tool adoption. Not the technology. Not the cost. The people.

These habits aren't about controlling the agent. They're about being good enough at your job to know when the agent isn't good enough at it. Observe the output. Intervene when it drifts. Systematize what works. Review where agents went wrong, share patterns that succeeded, and update your instructions as you learn.

The best AI collaborators aren't the ones who write the best prompts. They're the ones who know the work.

References

- Rework (2024). The Math That's Killing Your AI Agent. Towards Data Science.

- McKinsey (2025). Superagency in the Workplace: Empowering People to Unlock AI's Full Potential.

- Gartner (2024). Generative AI Will Require 80% of Engineering Workforce to Upskill Through 2027.

- Dell'Acqua, F. et al. (2026). Navigating the Jagged Technological Frontier. Organization Science.

- Mollick, E. (2023). Centaurs and Cyborgs on the Jagged Frontier. One Useful Thing.

- Mollick, E. (2025). The Shape of AI: Jaggedness, Bottlenecks and Salients. One Useful Thing.

- Bainbridge, L. (1983). Ironies of Automation. Automatica, 19(6), 775–779.

- Parasuraman, R. & Manzey, D. (2010). Complacency and Bias in Human Use of Automation. Human Factors, 52(3), 381–410.

- NASA (2018). Crew Resource Management: Principles and Practice. NASA Technical Reports.

- Amodei, D. (2024). Machines of Loving Grace.